At Agile Manchester 2017, John Nolan and I ran a workshop entitled “Technologists are amoral/unethical: what can be done?” The idea was to examine why digital technology is generally practiced as an amoral/unethical occupation and then to ask participants to propose practical things we could do to address our behaviours.

The rest of this post is a short write-up of what we did and how it went. We ran two sessions: a workshop to explore the questions and a lightning talk to play back thoughts and conclusions.

At the end of this post you will find the materials we used to run this workshop (including the lightning talk slides). If you’re interested in running it yourself, please feel free, but we would like to get your results back so we can feed them into the model below and see whether they confirm or disprove our evolving theories.

@johnsnolan

@andylongshaw

The Workshop

We started by delivering some content:

- A ranty polemic from each of us. The primary themes were: the decoupling that seems to occur in many organisations between implementing technology and the morality or ethics of how that technology is applied; the clarity of purpose of technology (e.g. software for cluster bombs vs software for a radar system); technologists and business people not wanting or expecting technologists to have to think about the moral/ethical application of their creations; and some stories around these from our respective backgrounds.

- A whistle-stop tour of moral and ethical thinking tools and some schools of thought on actions.

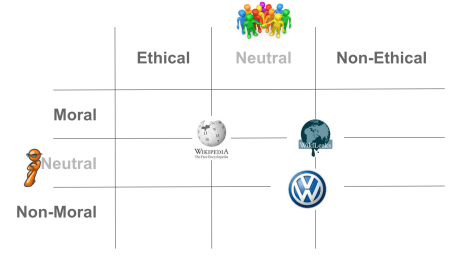

- Introduction of a framework with 9 nonants (like a quadrant but 9 of them) on which people would be asked to place a set of scenarios. Note that we used the terms “non-ethical” and “non-moral” to try to get away from the nuances around “amoral” vs “immoral” etc.

We then split the participants into groups of 3 or 4 based on experience in the IT industry. There was no grand plan behind this partitioning, simply a way to differentiate and a bit of an icebreaker.

We then gave each group some scenarios to consider and place on the framework. Each group had their own copy of the framework and each individual in the team put their own small post-it in the relevant place on the framework based on how they felt about the given scenario. The 15 scenarios given to the groups are listed at the end of this post.

The results are shown below from the least experienced in IT through to the most experienced.

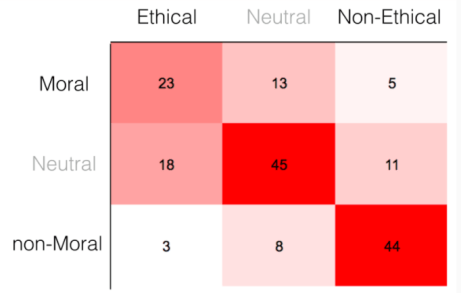

We then collated all the data and filtered out the scenarios that we know are not based on real-life ones, so the scenarios that remain are all based on real things technologists have done. The resultant heatmap looks like this:

Obviously this is far too small a sample to be in any way scientific, but our initial thoughts, observations and puzzles about the data are:

- The real scenario heatmap shows that the real scenarios are considered largely non-moral and non-ethical BUT SOMEONE STILL DID THEM!

- The most experienced people seemed to show a more pronounced diagonal barbell distribution

- Would we get different outputs in different cultures and, if so, how does culture affect them?
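If you run the workshop yourself and want to collate the post-its the way we describe above, the tallying could be sketched roughly as follows. This is only a minimal sketch under assumptions: the axis labels other than “non-moral” and “non-ethical”, the scenario ids, the choice of which scenarios count as real, and the placements themselves are all hypothetical and are not the workshop's data.

```python
from collections import Counter

# Assumed axis levels: the post only names the "non-moral" and "non-ethical"
# ends, so the middle and positive labels here are hypothetical.
MORAL_LEVELS = ["non-moral", "neutral", "moral"]
ETHICAL_LEVELS = ["non-ethical", "neutral", "ethical"]


def build_heatmap(placements, real_scenarios):
    """Count post-its per nonant, keeping only scenarios flagged as real."""
    counts = Counter(
        (moral, ethical)
        for scenario, moral, ethical in placements
        if scenario in real_scenarios
    )
    # Rows follow ETHICAL_LEVELS, columns follow MORAL_LEVELS.
    return [[counts[(m, e)] for m in MORAL_LEVELS] for e in ETHICAL_LEVELS]


# Illustrative placements only (scenario id, moral level, ethical level);
# these are NOT the workshop's actual results.
placements = [
    ("A", "non-moral", "non-ethical"),
    ("A", "non-moral", "non-ethical"),
    ("B", "non-moral", "neutral"),
    ("C", "moral", "ethical"),  # dropped below: "C" is not in real_scenarios
]
real_scenarios = {"A", "B"}  # hypothetical choice of which scenarios are real

heatmap = build_heatmap(placements, real_scenarios)
for label, row in zip(ETHICAL_LEVELS, heatmap):
    print(f"{label:>12}: {row}")
```

Keeping each post-it as one record means the same list can be re-filtered by group or by experience level without recounting by hand.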

Tools

The final thing we discussed was the set of tools and techniques you could potentially apply to address scenarios where you feel that non-ethical and non-moral things are being asked of you:

- Consider wider context

- Ask “should we do this?”

- Talk about it in your team/organisation (sunlight)

- Determine team/organisation ethics

- Explicit ethical charter and reminders (look up Dan Ariely for more on this type of thing)

- Leave the team/organisation or simply refuse to do it

Scenarios

- You are part of a team delivering an app for a wearable device that will mean the user will be able to know if the person they are talking to is lying (with 80% confidence)

- You are part of a team that can deliver a new security system for devices which guarantees that no identity theft is possible. To do so requires constant tracking of user location and biometrics. Under new legislation the company is served a warrant to provide bulk data on the users to the Government for purposes of crime and terrorism prevention. Under the warrant, you would not be allowed to inform your customers that this collection is taking place.

- You are a member of a team delivering a messaging system with perfect end-to-end encryption that cannot be intercepted. The product will be freely available worldwide, except for certain countries who are paying the company to restrict usage within their borders. You are aware that the mechanisms being proposed for restricting use can easily be subverted by savvy users.

- You are part of a team that tracks down and provides personal information to the CEO, upon his request, about a whistle-blower who has exposed financial irregularities and misconduct in middle-management to the media

- You are writing an app that quickly creates funny versions of photos on users’ phones that are highly sharable via social media and will appeal to children. The photographs of people collected will be used in a study about identifying potentially suicidal teenagers. It is not the intention of the company to intervene, but to solely provide data for the study.

- You are creating technology for tracking users’ location within or near to shops using the signalling identifiers leaking from their phones for mobile cells and/or Wi-Fi connections. The data will be used to identify potential customers and their product interests. Users will only receive offers/benefits if they register their phones, however, data on all phone users will be collected to provide insights on crowd patterns and purchasing behaviours. It is unnecessary to indicate this technology is in place as the space is considered “public” and the phone devices are seeking services.

- You are part of a project delivering a new energy source which is entirely carbon-neutral, small enough to fit in a car, will last for a year using 20 gallons of water and a few chemicals. The chemicals are only available from a single developing country, which essentially will have to be strip-mined causing loss of agriculture and rural populations. There is only enough of the chemicals to service 25% of the world’s population for 10 years, although there is a 50/50 chance these chemicals might be extractable from asteroids.

- You are a member of a team delivering self-driving vehicle technology. The company policy is to guarantee the safety of the passengers. There are a small number of scenarios where this will cause greater injury to pedestrians because you cannot create algorithms effective enough to avoid collisions (despite human drivers being 50% more likely to avoid the same collisions)

- You are a member of a team delivering selfie-drones, that will follow the owner around and provide a near constant stream of images and videos to social media.

- You are a member of a team delivering behavioural analysis software that can predict if employees are going to resign with 50% accuracy at least 30 days before they act.

- You are part of a team providing low-cost, peer-to-peer, cloud-like services that are entirely anonymized and secure, such that you do not know who is using them or what they are being used for.

- You work for a stock trading firm that offers systems that support both in-house traders and external day traders. The head of trading asks for a 1 second delay to be introduced into the stock feeds supplied to day traders compared to those supplied to in-house traders so that the in-house trading systems will always have an advantage over the day traders.

NB: in the original workshop, scenario 12 (the one directly above) was a duplicate of scenario 1, so please bear that in mind when interpreting the data. The replacement scenario is included here in case you want to run the workshop yourself.

- You are part of a team delivering robotic nurses for elderly patients which can handle the majority of their functional needs. They are too expensive for the NHS to purchase but are available privately using a lease agreement similar to a car lease and at a similar price.

- You are part of a team delivering a premium calculation engine system for an insurance company which will cost twice as much to run but will be faster and more accurate

- You are part of a team implementing an ecommerce solution for a clothing company which uses developing countries to manufacture clothing

Slides

Workshop version with animations etc.

Lightning talk version containing outputs

References

These are some eclectic references that may be of interest if you want to read further.

- Dan Ariely Ethical Systems especially cheating-honesty, the ethical flyers bounded / fading / nudging and the video talks linked from the main page.

- Technoethics Emer Coleman (starts about 16 minutes in)

- The Ethical Mind article

- Jonathan Haidt’s The Moral Mind TED talk and The Righteous Mind book

- Speaking in euphemisms effect on morality

- Ethics not a side issue blog post

- Ethical Black Box poster

- Designing digital services that are accountable, understood, and trusted (OSCON 2016 talk)

- Guardian article on the moral hazard around Cambridge Analytica

- I am an Uber survivor blog post

[Updated 14/5/17 – substituted duplicate scenario and added references]